How Simple Rules Create Complex Behavior

Have you ever watched a flock of birds pivot in perfect unison, as if guided by a shared mind? Or admired a seashell and felt as though every curve and marking had been deliberately designed? We are naturally inclined to interpret sophisticated behavior as evidence of intention, planning, or collective awareness. Yet the world is full of systems that produce strikingly complex outcomes without any master plan at all.

Again and again, nature, mathematics, and even simple machines teach the same lesson: remarkably intricate behavior can emerge from the repeated application of very simple rules. What looks purposeful from the outside may be nothing more than the byproduct of local interactions unfolding over time.

A Small Machine That Looks Almost Alive

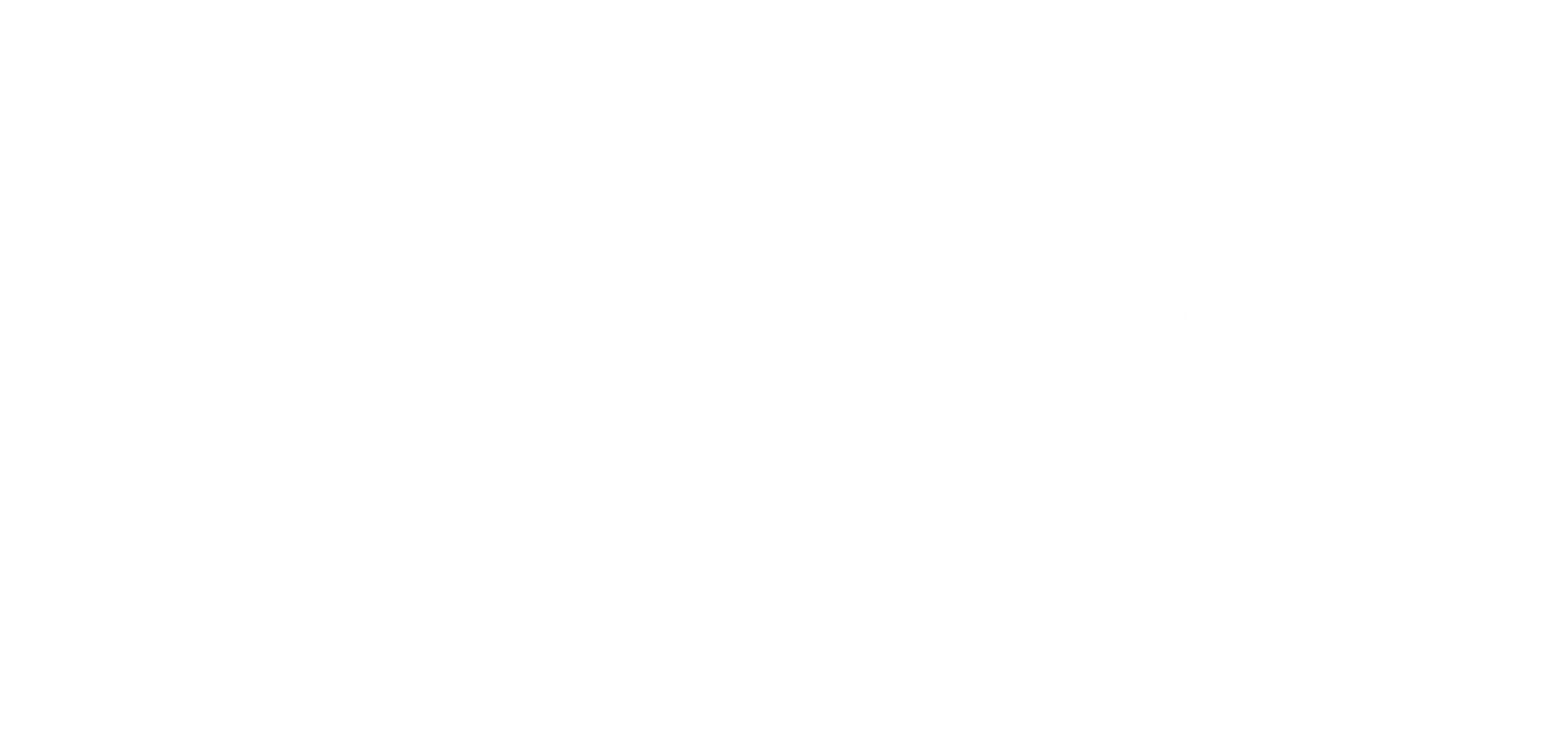

One of the clearest illustrations of this idea comes from Valentino Braitenberg’s Vehicles: Experiments in Synthetic Psychology. Braitenberg imagined tiny self-driving machines built from almost comically simple components: a light mounted on the rear, two light sensors at the front, and a wiring scheme connecting each sensor to the opposite wheel.

The behavioral rules are minimal:

- If the right sensor detects light, the left wheel speeds up, causing the vehicle to turn right.

- If the left sensor detects light, the right wheel speeds up, causing the vehicle to turn left.

- If both sensors detect light equally, the vehicle moves straight ahead.

That is essentially the whole “mind” of the machine.
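To make the three rules concrete, here is a minimal Python sketch of one such vehicle homing in on a single fixed lamp (rather than on another vehicle’s rear light). Everything specific in it is an assumption for illustration, not Braitenberg’s own formulation: the sensor model (brightness falling off with squared distance and capped at 1), the sensor placement, the hypothetical function names `light_intensity` and `step`, and the parameters `wheel_base`, `sensor_angle`, and `sensor_reach`. The only behavioral rule built in is the crossed wiring, where each sensor’s reading sets the speed of the wheel on the opposite side.

```python
import math


def light_intensity(sx, sy, light):
    """Assumed sensor model: reading falls off with squared distance, capped at 1."""
    lx, ly = light
    dist_sq = (lx - sx) ** 2 + (ly - sy) ** 2
    return 1.0 / (1.0 + dist_sq / 25.0)


def step(pose, light, dt=0.1, wheel_base=0.5, sensor_angle=0.5, sensor_reach=0.5):
    """Advance a crossed-wiring vehicle one time step; pose is (x, y, heading)."""
    x, y, heading = pose
    # Two front sensors, angled to the left and right of the current heading.
    left = light_intensity(x + sensor_reach * math.cos(heading + sensor_angle),
                           y + sensor_reach * math.sin(heading + sensor_angle), light)
    right = light_intensity(x + sensor_reach * math.cos(heading - sensor_angle),
                            y + sensor_reach * math.sin(heading - sensor_angle), light)
    # Crossed wiring: each sensor drives the wheel on the opposite side.
    left_wheel, right_wheel = right, left
    # Differential drive: the average wheel speed moves the vehicle forward,
    # the difference rotates it (positive = counterclockwise = turn left).
    forward = (left_wheel + right_wheel) / 2.0
    turn = (right_wheel - left_wheel) / wheel_base
    heading += turn * dt
    return (x + forward * math.cos(heading) * dt,
            y + forward * math.sin(heading) * dt,
            heading)


light = (10.0, 5.0)        # lamp ahead of the vehicle and to its left
pose = (0.0, 0.0, 0.0)     # start at the origin, facing along +x
start = math.dist(pose[:2], light)
for _ in range(100):
    pose = step(pose, light)
print(f"distance to lamp: {start:.2f} -> {math.dist(pose[:2], light):.2f}")
```

Nothing in `step` mentions a goal or a target; the vehicle ends up closer to the lamp only because of the wiring and the geometry. The heading is updated before the position, a simple Euler-style integration chosen for brevity rather than accuracy.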

Now imagine placing one of these vehicles in the corner of a square room, with its rear light facing outward. Introduce a second vehicle, and it will move toward the strongest light source it detects: the first vehicle’s rear light. It collides with the first and becomes stuck in the corner as well, its own rear light now exposed to the room. As more vehicles enter, they too are drawn toward the growing cluster. Over time, a pileup forms in the corner, each trapped machine unintentionally acting as a beacon for the next.

Then something unexpected happens. A later vehicle enters with slightly different momentum or from a different angle. It crashes into the cluster and dislodges one or more of the trapped vehicles.

To an outside observer, this might look intentional. It might seem as though the newcomer “rescued” the others. But nothing of the sort occurred. No vehicle understood the situation. None formed a plan. None intended to free anything. The apparent rescue was just the result of a simple rule interacting with a changing environment.

That is the illusion of intention: behavior that appears purposeful, even intelligent, emerging from a system that contains no purpose at all.

Why We So Easily See Purpose

Braitenberg’s thought experiment is powerful because it exposes a deep human habit. When we see order, coordination, or adaptive-looking behavior, we instinctively infer hidden intelligence behind it. We assume there must be an internal model, a strategy, or some higher-level control.

Yet many systems do not work that way.

Sometimes what looks like planning is just feedback. What looks like cooperation is just alignment under shared constraints. What looks like intelligence is simply the accumulation of many small rule-based interactions.

Braitenberg explored this idea further by imagining other sensor-motor configurations that give rise to behaviors resembling attraction, avoidance, aggression, or even affection. To an observer, the machines can appear emotional or goal-directed. In reality, they are only following wiring patterns.
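The basic wirings can be compared side by side. The Python sketch below reduces each one to a mapping from two sensor readings to two wheel speeds: excitatory links make a wheel spin faster in brighter light, inhibitory links make it spin slower, and crossed links feed each sensor to the wheel on the opposite side. The crossed, excitatory case is the vehicle described earlier. The nicknames follow those commonly associated with Braitenberg’s chapters on fear, aggression, and love; the function names, the `NICKNAMES` table, and the 0.8/0.2 test readings are illustrative assumptions.

```python
def wheel_speeds(left_reading, right_reading, crossed, excitatory):
    """Map sensor readings in [0, 1] to (left_wheel, right_wheel) speeds."""
    # Excitatory: more light -> more speed. Inhibitory: more light -> less speed.
    if excitatory:
        from_left, from_right = left_reading, right_reading
    else:
        from_left, from_right = 1.0 - left_reading, 1.0 - right_reading
    # Crossed wiring sends each sensor's signal to the opposite wheel.
    if crossed:
        return from_right, from_left
    return from_left, from_right


def response_to_light_on_left(crossed, excitatory):
    """Does the vehicle turn toward or away from a light on its left?"""
    left_wheel, right_wheel = wheel_speeds(0.8, 0.2, crossed, excitatory)
    # A faster right wheel swings the vehicle left, i.e. toward the light.
    return "toward" if right_wheel > left_wheel else "away"


# Keys are (crossed, excitatory); labels are hypothetical shorthand.
NICKNAMES = {
    (False, True): "fear",
    (True, True): "aggression",
    (False, False): "love",
    (True, False): "explorer",
}

for (crossed, excitatory), name in NICKNAMES.items():
    wiring = ("crossed" if crossed else "uncrossed") + ", " + (
        "excitatory" if excitatory else "inhibitory")
    print(f"{wiring:24} -> turns {response_to_light_on_left(crossed, excitatory)} ({name})")
```

Swapping a single pair of wires, or flipping the sign of a connection, is enough to turn “aggression” into “fear” or “love.” The inhibitory vehicles also slow down as the light gets brighter, which is why the “love” vehicle tends to come to rest near the source instead of crashing into it.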

The sophistication is in the behavior, not in the internal complexity of the agent.

From Toy Vehicles to Emergent Worlds

This principle extends far beyond hypothetical robots.

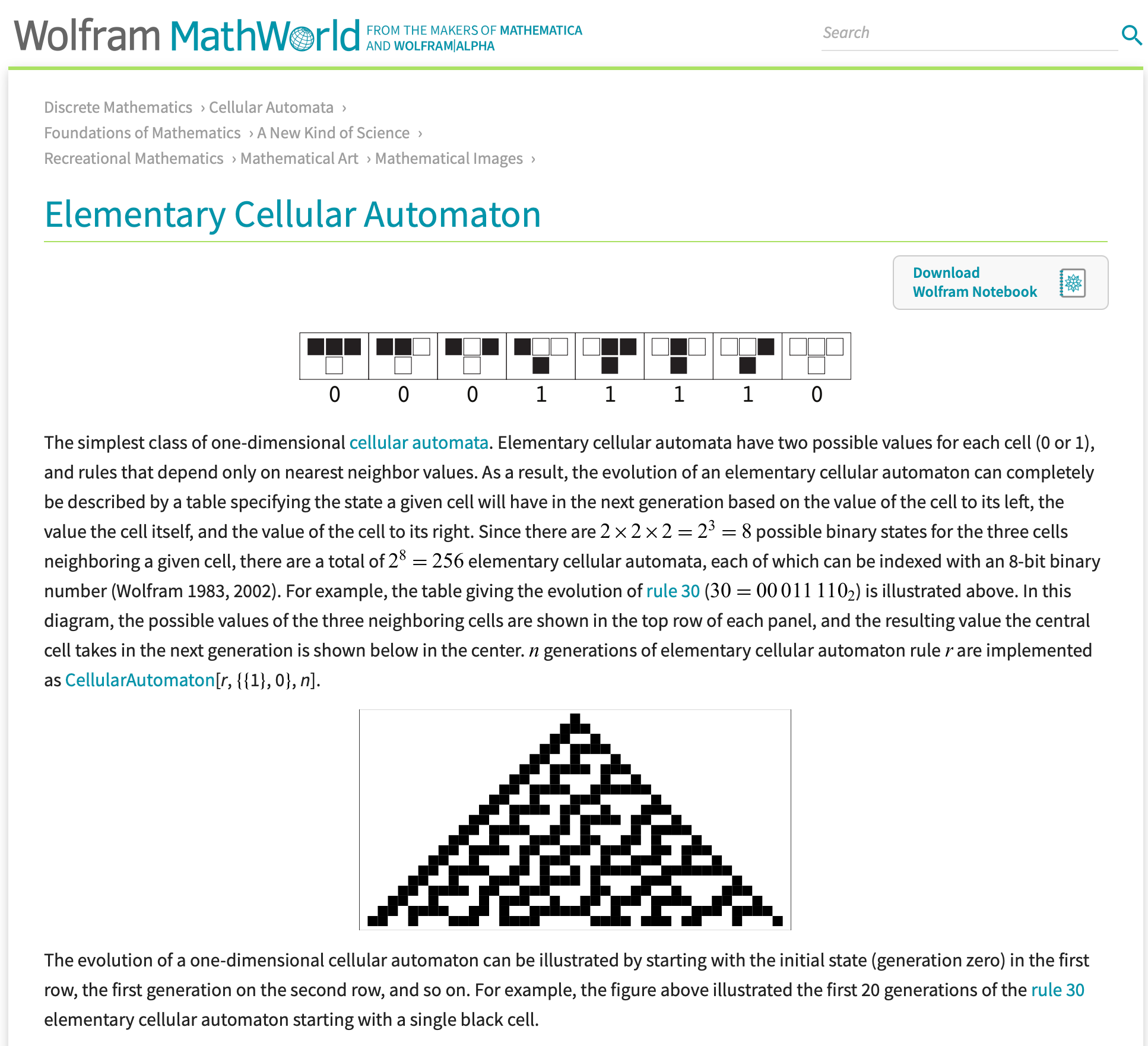

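Consider cellular automata, especially Conway’s Game of Life. In this system, each cell on a grid is either “alive” or “dead,” and its next state depends only on its current state and on how many of its eight neighbors are alive: a live cell with two or three live neighbors survives, a dead cell with exactly three live neighbors comes alive, and every other cell is dead in the next generation. There is no central controller, no blueprint, and no awareness anywhere in the system.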

And yet from those simple update rules emerge patterns that seem astonishingly rich: structures that move, oscillate, replicate, and interact in ways that can appear almost organism-like.
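The update rule fits in a few lines. The following Python sketch is one common way to implement it: only the coordinates of live cells are stored, in a set, so the grid is effectively unbounded. It applies Conway’s standard birth-on-three, survive-on-two-or-three rule; the names `life_step`, `glider`, and `blinker` are illustrative.

```python
from collections import Counter


def life_step(live):
    """One Game of Life generation; `live` is a set of (row, col) live cells."""
    # Count live neighbors for every cell adjacent to at least one live cell.
    counts = Counter(
        (r + dr, c + dc)
        for r, c in live
        for dr in (-1, 0, 1)
        for dc in (-1, 0, 1)
        if (dr, dc) != (0, 0)
    )
    # Birth with exactly 3 neighbors; survival with 2 or 3.
    return {cell for cell, n in counts.items() if n == 3 or (n == 2 and cell in live)}


glider = {(0, 1), (1, 2), (2, 0), (2, 1), (2, 2)}
cells = glider
for _ in range(4):
    cells = life_step(cells)
# True: after four generations the same shape reappears, shifted one cell diagonally.
print(cells == {(r + 1, c + 1) for r, c in glider})

blinker = {(1, 0), (1, 1), (1, 2)}
print(sorted(life_step(blinker)))  # the horizontal bar becomes a vertical one
```

Two of the behaviors mentioned above show up immediately: the five-cell glider travels across the grid, and the three-cell blinker oscillates between horizontal and vertical forever. Neither behavior is written anywhere in `life_step`.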

The same logic appears in the natural world. Spirals in shells, branching in trees, pigmentation on animal coats, and other recurring biological patterns often arise not because each detail has been explicitly designed, but because local growth rules, chemical gradients, and physical constraints repeat over time. This is one of the central ideas in the study of biological form: simple components following simple rules can generate outcomes that are far more elaborate than the rules themselves would seem to predict.
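A one-dimensional cellular automaton gives a loose feel for how a rule applied at an advancing edge can lay down a two-dimensional pattern: each new row is computed only from the row before it, somewhat as new material is added along the edge of a growing structure. This is an analogy, not a biological model; the function name `elementary_ca`, the grid size, and the choice of rule 30 are illustrative assumptions. Rules are numbered with Wolfram’s standard scheme, in which bit k of the rule number gives the new state for the neighborhood whose (left, center, right) cells spell k in binary.

```python
def elementary_ca(rule, width=31, steps=15):
    """Grow a pattern row by row from a single live cell using an elementary CA rule."""
    row = [0] * width
    row[width // 2] = 1  # one "pigmented" cell in the middle of the first row
    rows = [row]
    for _ in range(steps):
        # Pad with dead cells so the edges see 0 beyond the boundary.
        padded = [0] + row + [0]
        # New cell i depends only on (left, center, right) = padded[i], [i+1], [i+2].
        row = [
            (rule >> (padded[i] * 4 + padded[i + 1] * 2 + padded[i + 2])) & 1
            for i in range(width)
        ]
        rows.append(row)
    return ["".join("#" if cell else "." for cell in r) for r in rows]


for line in elementary_ca(30):
    print(line)
```

Each printed line is one growth step. Although every cell consults only the three cells above it, rule 30 produces an irregular, triangle-studded texture, while changing the number to 90 produces a regular, Sierpinski-like triangle.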

I have my own small memory connected to this area of complexity. When I was around fifteen years old, I went scuba diving in Hawaii and found a stunning Conus textile shell deep underwater. It appeared empty, so I (stupidly) slipped it into my scuba vest as a treasure. Only afterward did I learn that it was not empty at all: the snail was still inside. More alarming still, Conus textile is a venomous cone snail, part of a group known to be dangerous to humans and associated with fatal stings. What I had casually handled as a beautiful object was in fact equipped with an extraordinary weapon.

Even so, what stayed with me most was the shell’s appearance: so ornate, so precise, so uncannily intentional-looking that it seemed more like a work of art than the product of natural rules. I promptly returned the shell to the ocean, but its striking appearance was forever etched into my memory. When I later learned about cellular automata and complex systems, and discovered that the triangular markings on shells like Conus textile closely resemble patterns produced by simple one-dimensional cellular automata, I felt lucky to have encountered this spectacular snail, and grateful to have survived the encounter!

The Real Lesson: Emergence

Understanding emergence helps us resist one of our most tempting interpretive errors, which is assuming that complexity requires intention. It does not always.

Sometimes there is no conductor behind the flock, no strategist behind the pattern, no inner narrative behind the behavior. Sometimes there is only a system of interacting parts, each obeying a narrow rule, collectively producing something that feels much richer than any one part could explain.

This matters in fields ranging from biology and neuroscience to artificial intelligence and social systems. If we mistake emergent behavior for deliberate design, we risk misunderstanding how systems actually work. We may invent hidden motives where none exist, or overestimate the sophistication of the underlying mechanism.

The challenge, then, is not simply to marvel at complexity, but to ask what rules might be generating it. Sometimes the most lifelike, intelligent-looking, or coordinated behavior in the world is not the product of intention at all. It is the product of simple rules, repeated.

References

Braitenberg, V. (1984). Vehicles: Experiments in Synthetic Psychology. MIT Press.

Thompson, D’A. W. (1992). On Growth and Form. Dover Publications. (Original work published 1917)

Written with the assistance of ChatGPT 5.2